Concurrency in Java: The Big Picture

We have been playing with multithreading in Java for some time and would like to share our findings, and how they have changed the way we understand the concurrency model.

Please understand that we are no experts on this. When we started, this is what we knew:

- Creating threads is expensive, which is why all web servers come with a thread pool baked in.

- Most systems use an Executor to distribute work among threads.

- Future and CompletableFuture are ongoing, asynchronous tasks that return a value (or throw an exception) at some point.

If you are vaguely familiar with concurrency in Java, this could be your own baseline.
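To make that baseline concrete, here is a minimal sketch using only the JDK. The class and method names (BaselineDemo, withFuture, withCompletableFuture) are made up for illustration; the point is just to show an Executor running a task and the two flavors of future holding its result.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

class BaselineDemo {

    // Submits a task to the pool and blocks until its Future has a value.
    static int withFuture(ExecutorService pool) {
        Future<Integer> future = pool.submit(() -> 6 * 7);
        try {
            return future.get(); // blocks the caller until the task finishes
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        }
    }

    // Same task as a CompletableFuture, with a follow-up stage chained on.
    static String withCompletableFuture(ExecutorService pool) {
        return CompletableFuture
            .supplyAsync(() -> 6 * 7, pool)
            .thenApply(n -> "answer=" + n)
            .join(); // also blocks, but only throws unchecked exceptions
    }

    static String run() {
        ExecutorService pool = Executors.newFixedThreadPool(2);
        try {
            return withFuture(pool) + " " + withCompletableFuture(pool);
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println(run()); // prints "42 answer=42"
    }
}
```

Both variants still block at the end (get() and join()), which is fine for a demo but is exactly the habit the rest of this post questions.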

These days we are migrating some technologies while moving to Kubernetes, and decided to write down what we learned from the perspective of "consumers of libraries." While we always track performance, it was not a big priority – we found our concurrency model just by following the rabbit hole.

Your web server includes a connection pool

We use an embedded Jetty, which includes a connection pool for incoming requests. Receive a request, prepare the contents, return a response. Do you want to see a plain, no-brainer diagram?

Don't worry, it gets better.

We are living in the Future

We are introducing several client libraries from Google: Firestore, Cloud Storage, PubSub, a couple more. All of them share this in common:

- You can provide an Executor during initialization.

- Most methods return a Future and provide no synchronous alternative.

These are all features provided by gRPC, which these products use to communicate with their respective servers. gRPC delegates any blocking connection to a different executor, to avoid blocking the current thread.

Let's see this with an example:

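import com.google.api.core.ApiFuture;
import com.google.api.core.ApiFutures;
import com.google.cloud.firestore.DocumentSnapshot;
import com.google.cloud.firestore.Firestore;
import com.google.common.util.concurrent.MoreExecutors;
import io.javalin.http.Context; // package name as of Javalin 3

public class UserController {

    // Firestore client, assumed to be initialized at application startup
    private static Firestore firestore;

    public static void getUser(Context ctx, String userId) throws Exception {
        // 1. Retrieve the document from Firestore
        ApiFuture<DocumentSnapshot> documentFuture = firestore.collection("users")
            .document(userId)
            .get();

        // 2. Transform the document to JSON
        ApiFuture<JsonUser> userFuture = ApiFutures.transform(
            documentFuture,
            document -> document.toObject(JsonUser.class),
            MoreExecutors.directExecutor()
        );

        // 3. Wait for a result from the future
        JsonUser user = userFuture.get();
        ctx.json(user);
    }
}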

You can switch to CompletableFuture instead of Future for an experience closer to a JavaScript Promise; the idea remains mostly the same.

Did you notice the problem? In step three, userFuture.get() blocks the current thread on the web executor while it waits for a response from gRPC. We have a thread waiting for another thread to complete.
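The effect is easy to reproduce with the JDK alone. The sketch below is a simplified model, not our production setup: a hypothetical single-threaded "web" pool, and a manually completed CompletableFuture standing in for the pending backend call. While the first request sits in get(), a second request that needs no backend at all cannot even start.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

class BlockedThreadDemo {

    // Returns {secondStartedWhileBlocked, secondStartedAfterCompletion}.
    static boolean[] run() {
        // Hypothetical "web" pool with one thread, to make the effect obvious.
        ExecutorService webPool = Executors.newSingleThreadExecutor();
        // Stands in for a pending backend call (for example a database read).
        CompletableFuture<String> backend = new CompletableFuture<>();
        CountDownLatch secondStarted = new CountDownLatch(1);
        try {
            // Request 1 blocks the only web thread until the backend answers.
            Callable<String> requestOne = () -> backend.get();
            webPool.submit(requestOne);
            // Request 2 is trivial, but it has no thread to run on.
            webPool.execute(secondStarted::countDown);

            boolean whileBlocked = secondStarted.await(200, TimeUnit.MILLISECONDS);

            backend.complete("user-123"); // the backend finally answers
            boolean afterCompletion = secondStarted.await(5, TimeUnit.SECONDS);
            return new boolean[] {whileBlocked, afterCompletion};
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } finally {
            webPool.shutdown();
        }
    }

    public static void main(String[] args) {
        boolean[] result = run();
        System.out.println("started while blocked: " + result[0]);
        System.out.println("started after completion: " + result[1]);
    }
}
```

A real server has many more threads than one, so the stall only shows up once enough requests are waiting at the same time, which is why it tends to appear under load.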

How bad can this be? In our experience, a lot.

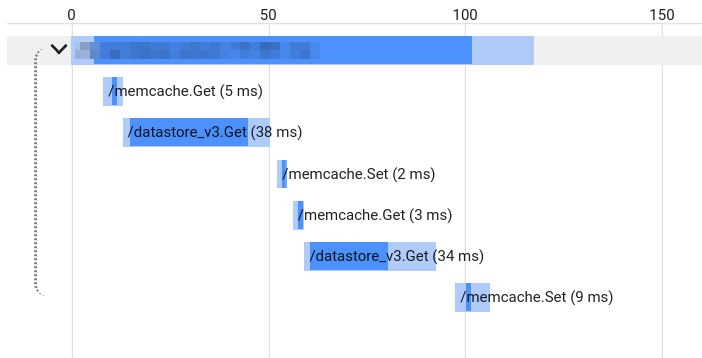

Our current application is waiting 80-90% of the time for either Datastore or Memcache. The following screenshot is a sample request where our web server was waiting 90ms (out of 120ms) to deliver a response.

This inter-thread waiting hurts the system under stress, and typically is not visible in your monitoring metrics unless you know what to look for. You may notice that memory usage goes up, but not the CPU, and assume this is just how Java rolls. In the meantime, more than half of your threads are there, waiting.

The trial-and-error patch would be to increase the thread pool size until the CPU usage goes up, and throw some RAM on top. But there are better options.

Return a Future instead of a JSON object

We recently started serving Futures with Javalin. In our example, replace step three with the following:

// 3. The web framework understands a CompletableFuture

ctx.json(userFuture);

The web executor is not blocked anymore, and returns a Future immediately. But then, who is responsible for rendering the JSON response?
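The same idea can be sketched with a plain CompletableFuture, leaving the web framework out. The handler below (a hypothetical getUser, with a hand-built JSON string instead of a real serializer) chains the transformation and returns the still-pending future instead of calling get().

```java
import java.util.concurrent.CompletableFuture;

class NonBlockingDemo {

    // Hypothetical handler: maps the pending backend result to JSON and
    // returns the still-pending future instead of waiting for it.
    static CompletableFuture<String> getUser(CompletableFuture<String> backend) {
        return backend.thenApply(name -> "{\"name\":\"" + name + "\"}");
    }

    static String run() {
        // Stands in for a backend call that has not answered yet.
        CompletableFuture<String> backend = new CompletableFuture<>();

        CompletableFuture<String> response = getUser(backend);
        boolean pendingAtReturn = !response.isDone(); // handler already returned

        backend.complete("Ada"); // the backend answers later
        return pendingAtReturn + " " + response.join();
    }

    public static void main(String[] args) {
        System.out.println(run()); // prints: true {"name":"Ada"}
    }
}
```

The handler returns while the response is still pending, so the calling thread is free to pick up other requests. The JSON only materializes when the backend completes the future.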

Running transformations on the current thread

Step two in the example above is using DirectExecutor to run the final stage and transform the document into a JSON object:

// 2. Transform the document to JSON
ApiFuture<JsonUser> userFuture = ApiFutures.transform(
    documentFuture,
    document -> document.toObject(JsonUser.class),
    MoreExecutors.directExecutor()
);

DirectExecutor executes the provided lambda in whichever thread invokes it, which is usually the thread that completes the input future. Its use is generally frowned upon because there is potential for creating deadlocks, so we are limiting its use to simple transformations between object types.

In this case, the executor running the last stage (the gRPC executor) is responsible for doing the final transformation and serializing the response to the browser. You may want to switch back to the web thread pool instead, by specifying a different executor for that last stage.
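The choice of executor for the last stage can be illustrated with the JDK equivalents. This is an analogy under stated assumptions, not the Guava or gRPC API: thenApply plays the role of a direct executor (the stage runs on the thread that completes the future), while thenApplyAsync with an explicit pool hands the stage over to that pool. The pools and thread names are invented for the demo.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

class StageThreadDemo {

    // Returns the names of the threads that ran each final stage.
    static String[] run() {
        // Hypothetical pools standing in for the gRPC and web executors.
        ExecutorService backendPool =
            Executors.newSingleThreadExecutor(r -> new Thread(r, "backend-thread"));
        ExecutorService webPool =
            Executors.newSingleThreadExecutor(r -> new Thread(r, "web-thread"));
        try {
            CompletableFuture<String> backend = new CompletableFuture<>();

            // Attached before completion, no executor given: runs on the
            // thread that completes the future.
            CompletableFuture<String> direct =
                backend.thenApply(doc -> Thread.currentThread().getName());

            // Explicit executor: the stage is handed over to the web pool.
            CompletableFuture<String> onWeb =
                backend.thenApplyAsync(doc -> Thread.currentThread().getName(), webPool);

            // The backend pool delivers the result.
            backendPool.execute(() -> backend.complete("document"));

            return new String[] {direct.join(), onWeb.join()};
        } finally {
            backendPool.shutdown();
            webPool.shutdown();
        }
    }

    public static void main(String[] args) {
        String[] threads = run();
        System.out.println("direct stage ran on: " + threads[0]); // backend-thread
        System.out.println("async stage ran on: " + threads[1]);  // web-thread
    }
}
```

Which option is better depends on how heavy the final stage is: a cheap type conversion is fine on the backend thread, while anything slow is probably better moved to a pool you size yourself.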

The road ahead

We are not done yet. We still need to find the type and size of our executors through trial and error, and optimize the security bottleneck to retrieve permissions before executing actions. Still, at least now we know what we are searching for.

How is your experience with concurrency? Anything that you do differently? Happy to hear your thoughts at koliseoapi on Twitter.